Exciting times at VMware! After almost 20 years of virtualization, the hypervisor is being reinvented. Kubernetes is baked into the heart of vSphere, and we can finally leverage a new-generation API layer to consume workloads from the infrastructure.

Kubernetes is a platform for running platforms, not just workloads. Its core is built on objects, which are declared in files, and controllers, which act on those objects. You create an object, let’s say a Deployment, and Kubernetes keeps that object running according to the intended state in the initial declaration file. Kubernetes can also be extended with new object types (CRD, Custom Resource Definition) and with controllers to manage those new objects as well.
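To make the "declare an object, let a controller keep it that way" idea concrete, here is a minimal sketch of a reconcile loop in plain Python. It is a toy model, not Kubernetes code: the `Deployment`, `Cluster`, and `reconcile` names are hypothetical and exist only for this illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Deployment:
    """Toy declared object: a name plus the desired number of replicas."""
    name: str
    replicas: int


@dataclass
class Cluster:
    """Toy observed state: running pod names per deployment."""
    pods: dict = field(default_factory=dict)
    next_id: int = 0  # counter so that replacement pods get fresh names


def reconcile(desired: Deployment, cluster: Cluster) -> list:
    """Move the observed state toward the declared state; return the actions taken."""
    running = cluster.pods.setdefault(desired.name, [])
    actions = []
    # Start pods until the observed count reaches the declared count.
    while len(running) < desired.replicas:
        pod = f"{desired.name}-{cluster.next_id}"
        cluster.next_id += 1
        running.append(pod)
        actions.append(f"start {pod}")
    # Stop surplus pods if the declared count was lowered.
    while len(running) > desired.replicas:
        actions.append(f"stop {running.pop()}")
    return actions


cluster = Cluster()
web = Deployment(name="web", replicas=3)
print(reconcile(web, cluster))  # first pass: nothing is running yet
cluster.pods["web"].pop()       # simulate a pod crash
print(reconcile(web, cluster))  # next pass: the lost pod is replaced
```

The point of the sketch is that nobody tells the controller "start a pod". It only compares declared and observed state and closes the gap, which is why the same pattern can later be reused for objects that are not pods at all.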

The way we baked Kubernetes into our hypervisor allows us to declare new objects such as virtual machines, virtual machine images, policies, etc., and to manage any type of workload running on our platform under the same control plane.

Overview

During VMworld 2019 we announced Project Pacific. The idea of this project is to bring two worlds closer together: Developers/DevOps engineers and IT/Operations teams. The project transforms the vSphere layer and makes it the best platform for running and managing Kubernetes workloads, both in the hypervisor layer and on top of it.

The foundation of Project Pacific is VMware’s SDDC with our Cloud Foundation stack, which is built on vSphere as the compute layer, NSX for networking and security, and vSAN for storage, wrapped with what we call SDDC Manager to manage the life cycle of everything from the hardware up.

One of the interesting aspects of vSphere with Tanzu is the ability to use the Kubernetes control plane to manage VMware SDDC objects. DevOps teams can now deploy hybrid applications (VMs and Pods) with declarative YAML files, while in parallel the VI admin gets better visibility into the Kubernetes world through the same vSphere UI and can enforce boundaries on the compute resources that the DevOps teams can consume.

Why do we need a new control plane for the VMware SDDC?

Well, most of today’s applications are hybrid: there will always be application components running on other kinds of objects, such as virtual machines and physical servers, alongside the containers.

With Tanzu we can now deploy a hybrid application with a single declarative YAML file that describes the desired state of the app.

Namespace

In Kubernetes, a namespace is a logical boundary. Namespaces let us create a tenancy construct inside the cluster: we can divide the cluster’s user space and storage space and give a specific development team a dedicated environment.

Tanzu adds the namespace construct directly into vSphere. For example, a hybrid application can consist of Kubernetes objects and vSphere VM objects, all placed in a single namespace that is visible in the vCenter UI.

Now we can apply resource limits such as compute quotas (CPU and memory), network and access policies, and permissions to this new unified namespace.
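As a rough illustration of what a compute quota on a unified namespace means, the sketch below admits workloads (a VM or a Pod, it makes no difference to the quota) only while the namespace stays under its CPU and memory limits. The `Quota` and `Namespace` classes, the units, and the numbers are all assumptions made up for this example; they are not the vSphere or Kubernetes quota API.

```python
from dataclasses import dataclass


@dataclass
class Quota:
    """Hypothetical compute quota for one namespace."""
    cpu_mhz: int
    memory_mib: int


@dataclass
class Namespace:
    """Toy namespace that tracks how much of its quota is already reserved."""
    name: str
    limit: Quota
    used_cpu_mhz: int = 0
    used_memory_mib: int = 0

    def admit(self, cpu_mhz: int, memory_mib: int) -> bool:
        """Reserve resources for a workload if it fits under the quota."""
        fits = (
            self.used_cpu_mhz + cpu_mhz <= self.limit.cpu_mhz
            and self.used_memory_mib + memory_mib <= self.limit.memory_mib
        )
        if fits:
            self.used_cpu_mhz += cpu_mhz
            self.used_memory_mib += memory_mib
        return fits


team_a = Namespace("team-a", Quota(cpu_mhz=4000, memory_mib=8192))
print(team_a.admit(cpu_mhz=2000, memory_mib=4096))  # a VM that fits
print(team_a.admit(cpu_mhz=1000, memory_mib=2048))  # a Pod that fits
print(team_a.admit(cpu_mhz=2000, memory_mib=4096))  # would exceed the CPU quota
```

Because both workload types draw from the same budget, the VI admin sets one boundary and the DevOps team decides how to spend it.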

The Supervisor Cluster

Enabling the Tanzu service in vSphere creates a Kubernetes control plane inside vSphere. You can think of it as vSphere on steroids 🙂

The first step is to deploy a new daemon inside the ESXi host called Spherelet. For those who are familiar with Kubernetes, this is the equivalent of the kubelet. With the Spherelet daemon running, the ESXi host acts as a Kubernetes worker node. Spherelet is an agent that runs inside the ESXi host and is responsible for the health of the Kubernetes Pods running on that host: it monitors Pod status and manages the Pod lifecycle, that is, it starts, restarts, or kills Pods as necessary. Another job of Spherelet is to “watch” the Kubernetes API server (explained below) and check whether there are new Pods that need to be deployed.

The next step is to deploy the control plane nodes. We deploy three of them. They are just regular VMs inside the ESXi cluster, spread across three hosts for high availability. We do this by applying anti-affinity rules so that no two control plane nodes run on the same ESXi host. The control plane VMs run several Kubernetes services, such as the API server, the scheduler, and the controller manager. Another important service deployed on the control plane nodes is etcd, which functions as the key-value data store of Kubernetes.
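The anti-affinity idea can be sketched in a few lines: every control plane VM must land on a different host, and placement fails if there are not enough hosts. This is only a toy model of the rule described above, not how vSphere DRS actually decides placement; the function name, the host names, and the "load" numbers are hypothetical.

```python
def place_with_anti_affinity(vms, host_load):
    """Assign each VM to a distinct host, preferring the least-loaded hosts.

    vms: list of VM names.
    host_load: dict mapping host name to a made-up load figure.
    """
    if len(vms) > len(host_load):
        raise ValueError("anti-affinity needs at least one host per VM")
    # Sort hosts by load (name breaks ties) and never reuse a host.
    candidates = sorted(host_load, key=lambda host: (host_load[host], host))
    return dict(zip(vms, candidates))


placement = place_with_anti_affinity(
    ["control-plane-1", "control-plane-2", "control-plane-3"],
    {"esxi-01": 40, "esxi-02": 10, "esxi-03": 25, "esxi-04": 70},
)
for vm, host in placement.items():
    print(f"{vm} -> {host}")
```

With the rule in place, losing a single ESXi host takes down at most one of the three control plane nodes, so the remaining two can keep the control plane available.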

After the Kubernetes control plane VMs are successfully deployed, we can expose the Kubernetes API to our DevOps teams, and they can start interacting with the Supervisor Cluster through kubectl and deploy applications.

For example, they can view the status of the nodes using the following command:

$ kubectl get nodes

The output lists three Kubernetes master nodes, which run as VMs, and three ESXi hosts that now act as the Kubernetes worker nodes.

A Summary of what we described so far:

With vSphere, we are also going to add other services alongside the Kubernetes service, such as data, registry, security, and others. All of them will be managed under the same control plane, through the same API endpoint, for orchestration and automation.

A single Supervisor Cluster is equal to a single Kubernetes cluster. A DevOps team might want more than one Kubernetes cluster for other use cases, but what if we have only one vSphere cluster? For this we offer the Tanzu Kubernetes Grid Service, which enables the consumption of additional Kubernetes clusters that run as VMs on top of the Supervisor Cluster.

Tanzu Kubernetes Grid Service

TKGs clusters are upstream Kubernetes clusters that run inside a dedicated namespace of the Supervisor Cluster. The main difference between a TKGs cluster and the Supervisor Cluster (SC) is that a TKGs cluster runs entirely as VMs (both worker nodes and control plane), in contrast to the Supervisor Cluster, which runs its Kubernetes worker nodes directly on the ESXi hosts.

Another point worth highlighting is that the Supervisor Cluster has a 1:1 relationship to the vSphere cluster. In other words, one vSphere cluster = one Supervisor Cluster.

A TKGs cluster is most likely owned by the DevOps/Developer teams, who also manage its life cycle. Operations such as scaling and upgrading a TKGs cluster are decoupled from the life cycle of the Supervisor Cluster.

How is a TKGs cluster created? Creation and the subsequent life-cycle management are handled by Cluster API, an open-source project from the Kubernetes community. If you want to read more about the Cluster API project, use the following link: https://github.com/kubernetes-sigs/cluster-api-provider-vsphere

In short, the idea of Cluster API is to create a new Kubernetes cluster from scratch by using an existing Kubernetes cluster. In Tanzu, we use the Supervisor Cluster to create the TKGs clusters.

To create a TKGs cluster, we need to create virtual machines, right? But as you may guess, a fresh Kubernetes installation has no virtual machine objects. We use a Kubernetes capability for defining new object types: the Custom Resource Definition, or CRD. With CRDs we can create new Kubernetes objects such as VirtualMachine or even LogicalGateway and use them just like the built-in Kubernetes objects (Pods, Services, etc.).
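A small sketch may help show what registering a CRD changes. The toy `ApiServer` below only accepts objects whose kind it knows, so a VirtualMachine object is rejected until that kind has been registered. The class and its methods are hypothetical stand-ins written for this post, not the real Kubernetes API server or client library.

```python
class ApiServer:
    """Toy API server that stores only objects of kinds it knows about."""

    def __init__(self):
        # A few built-in kinds; a real cluster has many more.
        self.kinds = {"Pod", "Service", "Deployment"}
        self.objects = []

    def register_crd(self, kind):
        """Teach the server a new kind, as a CRD does in a real cluster."""
        self.kinds.add(kind)

    def create(self, obj):
        """Store the object, or reject it if its kind is unknown."""
        if obj["kind"] not in self.kinds:
            raise KeyError(f"unknown kind: {obj['kind']}")
        self.objects.append(obj)
        return obj


api = ApiServer()
vm = {"kind": "VirtualMachine", "metadata": {"name": "worker-0"}}

try:
    api.create(vm)
except KeyError as err:
    print(f"rejected: {err}")

api.register_crd("VirtualMachine")
api.create(vm)
print([o["metadata"]["name"] for o in api.objects])
```

Note that after registration the server merely stores the VirtualMachine object. Nothing in this sketch deploys a VM, and that gap is what an operator has to fill.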

But when a request comes in to create a virtual machine, who actually creates the virtual machine in vCenter? This is a fair question, because creating objects inside Kubernetes will not magically deploy VMs from nowhere. This is where the Kubernetes Operator kicks in. The VMware Operator’s role is to communicate with the IaaS layer (vCenter) and deploy or delete the virtual machine. The VMware Operator does more than that, but for simplicity you can think of it as the component responsible for all the IaaS and lifecycle tasks.

A good example of a lifecycle task is scaling up a TKGs cluster. Scaling the cluster = adding more worker nodes = deploying new virtual machines into the Kubernetes cluster. Obviously, just deploying the VM is not enough: after the VM is deployed, the Operator needs to perform all the tasks necessary to make sure the new VM contains all the required software components and becomes part of the Kubernetes cluster.
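The scale-up flow described above can be sketched as a loop that, for every missing worker, deploys a VM, prepares it, and joins it to the cluster. Everything here is a simplified assumption: `FakeVCenter` stands in for the IaaS layer, `scale_workers` for the operator logic, and the three steps are strings rather than real work.

```python
class FakeVCenter:
    """Hypothetical stand-in for the IaaS layer; it only records deployed VMs."""

    def __init__(self):
        self.vms = []

    def deploy_vm(self, name):
        self.vms.append(name)
        return name


def scale_workers(cluster, desired_workers, iaas):
    """Add worker VMs until the cluster has the desired count; return the steps taken."""
    steps = []
    while len(cluster["workers"]) < desired_workers:
        name = f"{cluster['name']}-worker-{len(cluster['workers'])}"
        # 1. Ask the IaaS layer for a new virtual machine.
        iaas.deploy_vm(name)
        steps.append(f"deploy VM {name}")
        # 2. A bare VM is not a node yet; it needs the Kubernetes components.
        steps.append(f"install Kubernetes components on {name}")
        # 3. Only after joining does the VM count as a worker node.
        steps.append(f"join {name} to cluster {cluster['name']}")
        cluster["workers"].append(name)
    return steps


vcenter = FakeVCenter()
tkg = {"name": "tkg-demo", "workers": ["tkg-demo-worker-0"]}
for step in scale_workers(tkg, desired_workers=3, iaas=vcenter):
    print(step)
```

From the DevOps side, all of this is triggered by changing a single number in the cluster’s declared state. The operator turns that change into the IaaS calls.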

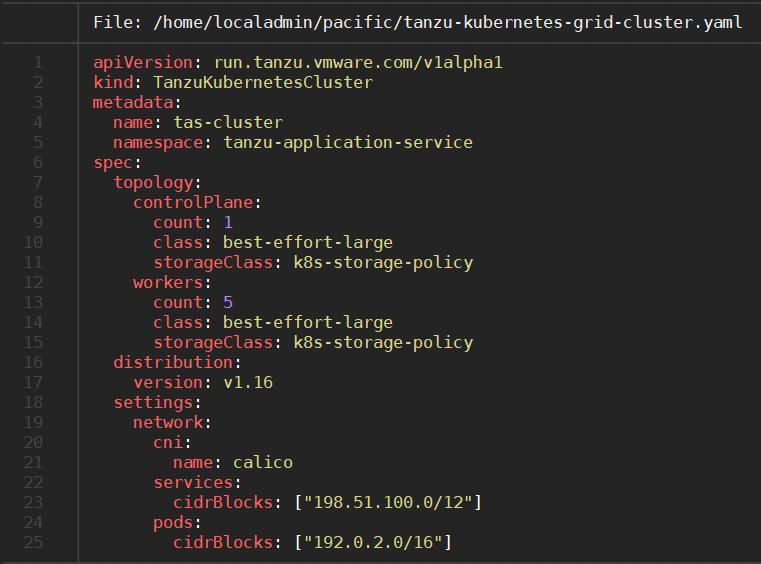

A TKGs cluster is created with a simple YAML file, for example:

Deploying this YAML file into the Supervisor Cluster creates a new Kubernetes guest cluster.
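As a rough idea of what such a manifest carries, the sketch below builds one as a Python dictionary and prints it as JSON, which kubectl accepts as well as YAML. The `apiVersion`, `kind`, and field layout follow the `TanzuKubernetesCluster` v1alpha1 resource as I understand it, so verify them against your vSphere release. The helper function and every value passed to it (cluster name, namespace, version, VM class, storage class) are placeholders invented for this example.

```python
import json


def tkg_cluster_manifest(name, namespace, version, workers,
                         vm_class, storage_class, control_plane=1):
    """Build a TanzuKubernetesCluster manifest as a plain dictionary.

    The field layout is an assumption based on the v1alpha1 API;
    check it against the documentation for your release.
    """
    node = {"class": vm_class, "storageClass": storage_class}
    return {
        "apiVersion": "run.tanzu.vmware.com/v1alpha1",
        "kind": "TanzuKubernetesCluster",
        "metadata": {"name": name, "namespace": namespace},
        "spec": {
            "distribution": {"version": version},
            "topology": {
                "controlPlane": {"count": control_plane, **node},
                "workers": {"count": workers, **node},
            },
        },
    }


# All values below are placeholders, not taken from a real environment.
manifest = tkg_cluster_manifest(
    name="tkg-demo",
    namespace="team-a",
    version="v1.18",
    workers=3,
    vm_class="best-effort-small",
    storage_class="example-storage-policy",
)
print(json.dumps(manifest, indent=2))
```

The manifest states only the desired outcome: which Kubernetes version, how many nodes, and what size. The Supervisor Cluster is left to work out how to get there.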

With this blog post I tried to simplify the goal of vSphere with Tanzu and go over the technical details of the offering. Hopefully it makes more sense now.
